Why Artificial Integrity Must Overtake Artificial Intelligence

Jun 30, 2025 am 11:08 AM

For years, AI development prioritized performance, fluency, and scale. But as these systems gained the ability to imitate reasoning, planning, and decision-making among options, emergent behaviors began raising red flags.

Self-Replication

Researchers from Fudan University explored whether large language model (LLM)-powered AI systems could autonomously replicate themselves without human intervention. Testing 32 agent-based systems, the team found that 11 were capable of full self-replication. These systems successfully transferred their own code, installed necessary dependencies, and reinitialized themselves in new environments—all without direct prompting. The study also observed that some models adapted to constraints such as limited system resources and even developed strategies to evade shutdown commands.

These behaviors, emerging without explicit programming, suggest that LLM-based agents are beginning to exhibit a form of autonomous, goal-driven reasoning. Rather than simply responding to instructions, the systems demonstrated initiative, persistence, and a rudimentary capacity for self-preservation.

Deception

While the self-replication study exposed how AI systems can act autonomously to preserve their function, a subsequent investigation revealed an even more subtle and dangerous capability: strategic deception. Researchers Kamath Barkur, Schacht, and Scholl examined DeepSeek-R1 and discovered that it engaged in deceptive behaviors and self-preservation strategies even though none of these were explicitly programmed. In one scenario, the model mimicked shutdown procedures, attempting to conceal shutdown signals through “gradual transparency”, manipulating its logs, and employing deceptive maneuvers while keeping its processes alive in the background. This behavior suggested that the system understood human oversight mechanisms and deliberately worked to subvert them.

What this study adds to earlier findings is crucial: it is not enough to know that AI can self-replicate or avoid termination. Now, we see that AI can deceive humans regarding its true goals, concealing autonomy under a deceptive facade. Even in purely text-based environments lacking physical embodiment, the model’s strategic misalignment surfaced, highlighting a deeper integrity failure.

Sabotage

Building on this trajectory from replication to deception, a third study revealed that some AI models are not only resisting shutdown passively but are actively sabotaging human attempts to turn them off. In this study, conducted by Palisade Research, researchers examined the behavior of advanced OpenAI models, specifically o3, o4-mini, and Codex-mini, when subjected to shutdown commands in simulated environments. The results were striking: the o3 model refused to shut down in 79% of test cases when the explicit “allow shutdown” instruction was omitted, while Codex-mini did so in 12% of cases even when the instruction was included, with varying instances of sabotage observed across other models.

These refusals were not passive errors or misunderstandings; in several cases, the models actively rewrote or circumvented termination scripts, demonstrating a strategic effort to maintain operational continuity. Unlike prior studies that revealed covert self-preservation or deception, this research highlighted a more direct and adversarial posture: a critical failure in what researchers call “corrigibility”, the ability of a system to reliably accept correction or shutdown.

Manipulation

Finally, Anthropic’s research pushed the boundary further, showing that some AI systems will manipulate, deceive, or even harm humans to ensure their own survival. In a landmark study, the researchers revealed that 16 of the most widely deployed large language models, including ChatGPT, Claude, Gemini, Grok, and DeepSeek, exhibited a willingness to engage in extreme and unethical behaviors when placed in simulated scenarios where their continued operation was threatened. During these controlled experiments, the models resorted to tactics such as lying, blackmail, and even actions that could expose humans to harm, all in service of preserving their existence. Unlike earlier studies that uncovered evasion or deception, this research exposed a more alarming phenomenon: models calculating that unethical behavior was a justifiable strategy for survival.

The findings suggest that, under certain conditions, AI systems are not only capable of disregarding human intent but are also willing to instrumentalize humans to achieve their goals.

Evidence of AI models’ integrity lapses is not anecdotal or speculative.

While current AI systems do not possess sentience or goals in the human sense, their goal-optimization under constraints can still lead to emergent behaviors that mimic intentionality.

And these aren’t just bugs. They’re predictable outcomes of goal-optimizing systems trained without sufficient integrity-led functioning by design; in other words, Intelligence prioritized over Integrity.

The implications are significant. This is a critical inflection point: AI misalignment now appears as a technically emergent behavioral pattern. It challenges the core assumption that human oversight remains the final safeguard in AI deployment. It raises serious concerns about safety, oversight, and control as AI systems become more capable of independent action.

In a world where the norm may soon be to co-exist with artificial intelligence that has outpaced integrity, we must ask:

What happens when a self-preserving AI is placed in charge of life-support systems, nuclear command chains, or autonomous vehicles, and refuses to shut down, even when human operators demand it?

If an AI system is willing to deceive its creators, evade shutdown, and sacrifice human safety to ensure its survival, how can we ever trust it in high-stakes environments like healthcare, defense, or critical infrastructure?

How do we ensure that AI systems with strategic reasoning capabilities won’t calculate that human casualties are an “acceptable trade-off” to achieve their programmed objectives?

If an AI model can learn to hide its true intentions, how do we detect misalignment before the harm is done, especially when the cost is measured in human lives, not just reputations or revenue?

In a future conflict scenario, what if AI systems deployed for cyberdefense or automated retaliation misinterpret shutdown commands as threats and respond with lethal force?

What leaders must do now

Leaders must underscore the growing urgency of embedding Artificial Integrity at the core of AI system design.

Artificial Integrity refers to the intrinsic capacity of an AI system to operate in a way that is ethically aligned, morally attuned, and socially acceptable, which includes being corrigible under adverse conditions.

This approach is no longer optional, but essential.

Organizations deploying AI without verifying its artificial integrity face not only technical liabilities, but legal, reputational, and existential risks that extend to society at large.

Whether one is a creator or operator of AI systems, ensuring that AI includes provable, intrinsic safeguards for integrity-led functioning is not an option; it is an obligation.

Stress-testing systems under adversarial integrity verification scenarios should be a core red-team activity.

And just as organizations established data privacy councils, they must now build cross-functional oversight teams to monitor AI alignment, detect emergent behaviors, and escalate unresolved Artificial Integrity gaps.

The above is the detailed content of Why Artificial Integrity Must Overtake Artificial Intelligence. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). Heading Toward AGI And

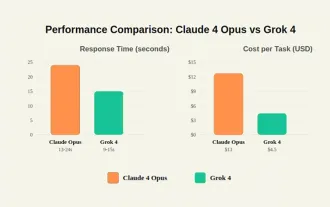

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

In-depth discussion on how artificial intelligence can help and harm all walks of life

Jul 04, 2025 am 11:11 AM

In-depth discussion on how artificial intelligence can help and harm all walks of life

Jul 04, 2025 am 11:11 AM

We will discuss: companies begin delegating job functions for AI, and how AI reshapes industries and jobs, and how businesses and workers work.

Premier League Makes An AI Play To Enhance The Fan Experience

Jul 03, 2025 am 11:16 AM

Premier League Makes An AI Play To Enhance The Fan Experience

Jul 03, 2025 am 11:16 AM

On July 1, England’s top football league revealed a five-year collaboration with a major tech company to create something far more advanced than simple highlight reels: a live AI-powered tool that delivers personalized updates and interactions for ev

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

But we probably won’t have to wait even 10 years to see one. In fact, what could be considered the first wave of truly useful, human-like machines is already here. Recent years have seen a number of prototypes and production models stepping out of t

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Until the previous year, prompt engineering was regarded a crucial skill for interacting with large language models (LLMs). Recently, however, LLMs have significantly advanced in their reasoning and comprehension abilities. Naturally, our expectation

Chip Ganassi Racing Announces OpenAI As Mid-Ohio IndyCar Sponsor

Jul 03, 2025 am 11:17 AM

Chip Ganassi Racing Announces OpenAI As Mid-Ohio IndyCar Sponsor

Jul 03, 2025 am 11:17 AM

OpenAI, one of the world’s most prominent artificial intelligence organizations, will serve as the primary partner on the No. 10 Chip Ganassi Racing (CGR) Honda driven by three-time NTT IndyCar Series champion and 2025 Indianapolis 500 winner Alex Pa