Unlocking Autonomous AI: 7 Methods for Self-Training LLMs

Imagine a future where AI systems learn and improve without human intervention, much like children mastering complex concepts on their own. This isn't science fiction; it's the promise of self-training Large Language Models (LLMs). This article explores seven methods that reduce or remove the need for human-labeled data and supervision, making LLM training more scalable and the resulting models more versatile.

Key Takeaways:

- Grasp the concept of human-free LLM training.

- Discover seven distinct autonomous LLM training techniques.

- Understand how each method enhances LLM self-improvement.

- Explore the potential benefits and challenges of these approaches.

- Examine real-world applications of self-trained LLMs.

- Comprehend the impact of self-training LLMs on the future of AI.

- Address the ethical considerations surrounding autonomous AI training.

Table of Contents:

- Introduction

- 7 Autonomous LLM Training Methods

  - Self-Supervised Learning

  - Unsupervised Learning

  - Reinforcement Learning with Self-Play

  - Curriculum Learning

  - Automated Data Augmentation

  - Zero-Shot and Few-Shot Learning

  - Generative Adversarial Networks (GANs)

- Conclusion

- Frequently Asked Questions

7 Autonomous LLM Training Methods:

Let's delve into the seven key methods enabling human-free LLM training:

1. Self-Supervised Learning:

This foundational method lets LLMs derive their own training labels from the input data, eliminating the need for manually labeled datasets. For example, by predicting a hidden word in a sentence (masked language modeling, as in BERT) or the next word (causal language modeling, as in GPT-style models), the LLM learns language patterns and context without explicit instruction. This makes it possible to train on massive amounts of unstructured text, which tends to produce more robust and general models.

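Example: A model predicts the missing word in "The cat sat on the _" (answer: mat). The label is simply the word that was removed from the original sentence, so no annotator is needed. Repeating this over billions of sentences teaches the model grammar, word meaning, and context.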
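To make the idea concrete, here is a minimal Python sketch of how raw text can be turned into self-supervised training pairs. It assumes naive whitespace tokenization; the function names and the `[MASK]` placeholder are illustrative choices, not any particular library's API, and the actual model training is left out.

```python
import random


def masked_word_example(sentence, mask_token="[MASK]", seed=None):
    """Hide one randomly chosen word; the hidden word becomes the label.

    No human annotation is involved: the original sentence supplies the answer.
    """
    words = sentence.split()  # naive whitespace tokenization (assumption)
    rng = random.Random(seed)
    idx = rng.randrange(len(words))
    label = words[idx]
    masked = words[:idx] + [mask_token] + words[idx + 1:]
    return " ".join(masked), label


def next_word_examples(sentence):
    """Build (context, next word) pairs, the objective of GPT-style models."""
    words = sentence.split()
    return [(" ".join(words[:i]), words[i]) for i in range(1, len(words))]


if __name__ == "__main__":
    print(masked_word_example("The cat sat on the mat", seed=1))
    for context, target in next_word_examples("The cat sat on the mat"):
        print(f"{context!r} -> {target!r}")
    # last line printed: 'The cat sat on the' -> 'mat'
```

Both functions produce (input, label) pairs from unlabeled text alone, which is the defining property of self-supervised learning.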

2. Unsupervised Learning:

Self-supervised learning is often described as a special case of unsupervised learning. More broadly, unsupervised learning trains on completely unlabeled data without constructing an explicit prediction target: the model identifies patterns, clusters, and structures within the data on its own. This is valuable for uncovering hidden structure in large datasets and for learning rich language representations.

Example: An LLM analyzes a vast text corpus, grouping words and phrases based on semantic similarity without pre-defined categories.
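The grouping in this example can be illustrated at toy scale with classic distributional semantics: words that appear in similar contexts get similar count vectors, with no categories supplied. The sketch below is an assumed illustration of that principle (co-occurrence counts plus cosine similarity), not how LLMs compute their internal representations; the tiny corpus is made up for the demo.

```python
import math
from collections import Counter, defaultdict


def context_vectors(sentences, window=2):
    """Map each word to counts of the words seen within `window` positions of it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.lower().split()
        for i, word in enumerate(words):
            for j in range(max(0, i - window), min(len(words), i + window + 1)):
                if j != i:
                    vectors[word][words[j]] += 1
    return dict(vectors)


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[key] for key, count in a.items() if key in b)
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def most_similar(word, vectors):
    """Return the word whose contexts most resemble those of `word`."""
    target = vectors[word]
    return max((w for w in vectors if w != word), key=lambda w: cosine(target, vectors[w]))


# Made-up corpus: nothing tells the code that "cat" and "dog" are both animals.
corpus = [
    "the cat drinks milk",
    "the dog drinks water",
    "the cat eats fish",
    "the dog eats meat",
]
vectors = context_vectors(corpus)
print(most_similar("cat", vectors))  # dog
```

"cat" ends up closest to "dog" purely because the two words share contexts ("the", "drinks", "eats").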

3. Reinforcement Learning with Self-Play:

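Reinforcement learning (RL) involves an agent making decisions within an environment and receiving rewards or penalties for them. Self-play applies this to LLMs by having a model compete or converse with itself or with modified copies of itself. Because no human scores each exchange, the reward has to come from an automatic signal, such as a game outcome, a verifier, or a learned reward model. The model can then keep refining its strategy on tasks like language generation, translation, and conversational AI.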

Example: An LLM engages in simulated conversations with itself, optimizing responses for coherence and relevance, thereby improving conversational skills.
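Self-play on real conversations needs a learned reward model, which is too large for a short example. The sketch below therefore swaps in a toy game (a small Nim variant, chosen here purely for illustration) to show the core mechanism: one shared policy plays both sides, and every move is scored only by the final outcome, with no human feedback. It is tabular Monte Carlo RL under these assumptions, not an LLM training recipe.

```python
import random
from collections import defaultdict


def legal_moves(pile):
    """Toy Nim rules (assumption): take 1 or 2 stones; taking the last stone wins."""
    return [m for m in (1, 2) if m <= pile]


def train_by_self_play(max_pile=7, episodes=20000, epsilon=0.1, seed=0):
    """Monte Carlo self-play: a single value table chooses moves for BOTH players."""
    rng = random.Random(seed)
    value = defaultdict(float)  # (pile, move) -> running average reward
    visits = defaultdict(int)   # (pile, move) -> number of updates so far

    def choose(pile):
        moves = legal_moves(pile)
        if rng.random() < epsilon:
            return rng.choice(moves)  # explore occasionally
        return max(moves, key=lambda m: value[(pile, m)])  # otherwise play greedily

    for _ in range(episodes):
        pile, player, history = rng.randint(1, max_pile), 0, []
        while pile > 0:
            move = choose(pile)
            history.append((player, pile, move))
            pile -= move
            player = 1 - player
        winner = history[-1][0]  # whoever took the last stone
        # Reward every move by the final outcome from the mover's point of view.
        for mover, pile_before, move in history:
            reward = 1.0 if mover == winner else -1.0
            key = (pile_before, move)
            visits[key] += 1
            value[key] += (reward - value[key]) / visits[key]

    # Greedy policy learned purely from games against itself.
    return {p: max(legal_moves(p), key=lambda m: value[(p, m)]) for p in range(1, max_pile + 1)}


policy = train_by_self_play()
print(policy)
```

From winning positions, the learned policy should leave the opponent a multiple of three stones, a strategy that no one wrote into the code.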

4. Curriculum Learning:

Mirroring human education, curriculum learning trains LLMs progressively on tasks of increasing complexity. Starting with simpler tasks and gradually introducing more challenging ones builds a strong foundation before the model tackles advanced problems. When difficulty is scored automatically, for example by sentence length or by the model's own loss on each example, the ordering itself needs little human intervention.

Example: An LLM learns basic grammar and vocabulary before progressing to complex sentence structures and idioms.
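A minimal sketch of automatic curriculum scheduling follows. It assumes a deliberately simple, hypothetical difficulty score (word count). Each stage adds harder examples while keeping the easier ones, and the training step that would consume each stage is omitted.

```python
def difficulty(sentence):
    """Hypothetical difficulty score: more words means harder."""
    return len(sentence.split())


def curriculum_stages(examples, num_stages=3):
    """Sort examples from easy to hard and release them in cumulative stages."""
    ordered = sorted(examples, key=difficulty)  # stable sort keeps ties in input order
    return [ordered[: round(len(ordered) * k / num_stages)] for k in range(1, num_stages + 1)]


examples = [
    "Dogs bark.",
    "The cat sat on the mat.",
    "I run.",
    "Although it rained, the children, who were excited, played outside.",
]
for stage, batch in enumerate(curriculum_stages(examples, num_stages=2), start=1):
    print(f"stage {stage}: {batch}")
```

Replacing `difficulty` with the model's per-example loss would give a self-paced variant, where the model itself decides what counts as easy.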

5. Automated Data Augmentation:

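Data augmentation generates new training data from existing data, a process that is easily automated and therefore well suited to human-free LLM training. Techniques like paraphrasing, synonym replacement, and sentence inversion create diverse training contexts, helping the model learn more from limited data. The generated variants should preserve the original meaning; otherwise they add noisy labels instead of useful examples.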

Example: The sentence "The dog barked loudly" could be transformed into variations like "The canine vocalised loudly," enriching the LLM's training data.
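The synonym-replacement step can be sketched as follows. The synonym table is a small hypothetical one written for this example; a real pipeline would draw on a larger lexical resource or a paraphrasing model and would filter out variants that change the meaning.

```python
import itertools

# Hypothetical synonym table for illustration only.
SYNONYMS = {
    "dog": ["canine"],
    "barked": ["vocalised"],
    "loudly": ["noisily"],
}


def synonym_variants(sentence, synonyms=SYNONYMS):
    """Return every distinct sentence reachable by swapping words for synonyms."""
    options = [[word] + synonyms.get(word.lower(), []) for word in sentence.split()]
    variants = (" ".join(combo) for combo in itertools.product(*options))
    # The number of combinations grows multiplicatively, so large tables
    # would normally be sampled rather than enumerated in full.
    return [v for v in variants if v != sentence]


for variant in synonym_variants("The dog barked loudly"):
    print(variant)
```

With three replaceable words, each having one synonym, the sentence yields 2 × 2 × 2 − 1 = 7 new variants.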

6. Zero-Shot and Few-Shot Learning:

Zero-shot and few-shot learning enable LLMs to apply existing knowledge to tasks they haven't been explicitly trained for, reducing reliance on large human-labeled datasets. In zero-shot learning the model tackles a task without any examples; in few-shot learning it is given only a handful. For LLMs, these examples are usually placed directly in the prompt (in-context learning), so the model's weights are not updated.

Example: An LLM proficient in English writing might translate simple Spanish sentences into English with minimal prior Spanish exposure, leveraging its understanding of general language patterns.
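The practical difference between the two settings is only what goes into the prompt. The sketch below builds both kinds of prompt in an assumed "Spanish: … / English: …" layout. The format is a hypothetical choice, and sending the string to a model is left out because that depends on the provider.

```python
def build_prompt(instruction, examples, query, source="Spanish", target="English"):
    """Format a zero-shot (no examples) or few-shot (a few examples) prompt.

    The examples live inside the prompt itself, so no model weights change.
    """
    lines = [instruction, ""]
    for src, tgt in examples:
        lines += [f"{source}: {src}", f"{target}: {tgt}", ""]
    lines += [f"{source}: {query}", f"{target}:"]
    return "\n".join(lines)


zero_shot = build_prompt("Translate Spanish to English.", [], "Buenas noches")
few_shot = build_prompt(
    "Translate Spanish to English.",
    [("Hola", "Hello"), ("Gracias", "Thank you")],
    "Buenas noches",
)
print(few_shot)
```

Ending the prompt with an empty `English:` line invites the model to complete the pattern set by the examples.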

7. Generative Adversarial Networks (GANs):

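GANs consist of a generator, which creates data samples, and a discriminator, which tries to tell them apart from real data. As the two compete, the generator learns to produce increasingly realistic samples that can then serve as LLM training data. This adversarial process needs little human oversight, because each model's training signal comes from the other. Applying GANs to text is harder than to images, however: sampling discrete tokens is not differentiable, so the discriminator's feedback cannot flow directly back into the generator.

Example: A GAN generates synthetic text that the discriminator can no longer reliably distinguish from human-written text, providing supplementary training material for an LLM.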
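The competition between the two networks is usually written as the minimax objective of the original GAN formulation. Here $D(x)$ is the discriminator's estimated probability that $x$ is real, and $G(z)$ is the generator's output for random noise $z$:

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

The discriminator pushes $V$ up by scoring real samples near 1 and generated ones near 0, and the generator pushes it down by making $D(G(z))$ large. For text, $G(z)$ is a sequence of sampled tokens, which is why text GANs rely on workarounds such as policy-gradient updates (as in SeqGAN) or Gumbel-softmax relaxations.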

Conclusion:

The pursuit of autonomous LLM training represents a significant leap forward in AI. Methods like self-supervised learning, self-play RL, and GANs empower LLMs to self-train, improving scalability and potentially surpassing traditionally trained models. However, ethical considerations surrounding bias, transparency, and responsible deployment are paramount.

Frequently Asked Questions:

Q1. What's the main advantage of human-free LLM training?

A1. Scalability – LLMs can learn from massive datasets without the need for costly and time-consuming human labeling.

Q2. How does self-supervised learning differ from unsupervised learning?

A2. Self-supervised learning generates labels from the data itself; unsupervised learning uses no labels, focusing on pattern identification.

Q3. Can autonomously trained LLMs outperform traditionally trained models?

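A3. In some settings, yes. Self-play in particular has produced game-playing systems that outperform versions trained on human data, thanks to continuous refinement. Autonomous training does not remove bias, however: models still inherit biases from their data and from whatever reward signal they optimize.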

Q4. What are the ethical concerns with autonomous AI training?

A4. Key concerns include biases absorbed from the training data, a lack of transparency about what the model has learned, and the need for responsible deployment to prevent misuse.

Q5. How does curriculum learning benefit LLMs?

A5. It allows LLMs to build a strong foundation before tackling complex tasks, leading to more efficient and effective learning.


Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

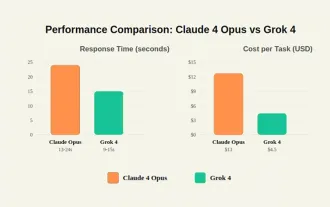

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

But we probably won’t have to wait even 10 years to see one. In fact, what could be considered the first wave of truly useful, human-like machines is already here. Recent years have seen a number of prototypes and production models stepping out of t

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Until the previous year, prompt engineering was regarded a crucial skill for interacting with large language models (LLMs). Recently, however, LLMs have significantly advanced in their reasoning and comprehension abilities. Naturally, our expectation

Build a LangChain Fitness Coach: Your AI Personal Trainer

Jul 05, 2025 am 09:06 AM

Build a LangChain Fitness Coach: Your AI Personal Trainer

Jul 05, 2025 am 09:06 AM

Many individuals hit the gym with passion and believe they are on the right path to achieving their fitness goals. But the results aren’t there due to poor diet planning and a lack of direction. Hiring a personal trainer al

6 Tasks Manus AI Can Do in Minutes

Jul 06, 2025 am 09:29 AM

6 Tasks Manus AI Can Do in Minutes

Jul 06, 2025 am 09:29 AM

I am sure you must know about the general AI agent, Manus. It was launched a few months ago, and over the months, they have added several new features to their system. Now, you can generate videos, create websites, and do much mo

Leia's Immersity Mobile App Brings 3D Depth To Everyday Photos

Jul 09, 2025 am 11:17 AM

Leia's Immersity Mobile App Brings 3D Depth To Everyday Photos

Jul 09, 2025 am 11:17 AM

Built on Leia’s proprietary Neural Depth Engine, the app processes still images and adds natural depth along with simulated motion—such as pans, zooms, and parallax effects—to create short video reels that give the impression of stepping into the sce

These AI Models Didn't Learn Language, They Learned Strategy

Jul 09, 2025 am 11:16 AM

These AI Models Didn't Learn Language, They Learned Strategy

Jul 09, 2025 am 11:16 AM

A new study from researchers at King’s College London and the University of Oxford shares results of what happened when OpenAI, Google and Anthropic were thrown together in a cutthroat competition based on the iterated prisoner's dilemma. This was no