Introduction

Large Language Models (LLMs) are revolutionizing natural language processing, but their immense size and computational demands limit deployment. Quantization, a technique to shrink models and lower computational costs, is a crucial solution. This paper comprehensively explores LLM quantization, examining various methods, their effects on performance, and practical applications across diverse fields. We also discuss challenges and future research directions.

Overview

This paper analyzes:

- How quantization reduces the computational burden of LLMs without significant performance loss.

- The challenges posed by the size and resource needs of advanced LLMs.

- Quantization as a method to discretize continuous values, simplifying LLMs.

- Different quantization methods (post-training and quantization-aware training), and their performance impact.

- The potential of quantized LLMs in edge computing, mobile apps, and autonomous systems.

- Trade-offs, hardware considerations, and the need for ongoing research to improve LLM quantization.

Table of contents

- The Rise of Large Language Models

- LLM Quantization: An In-Depth Look

  - Understanding Quantization

  - Quantization Methods

  - Visualizations

- Quantization's Effect on Model Performance

  - Key Metrics

- Applications of Quantized LLMs

- Challenges in LLM Quantization

- Conclusion

- Frequently Asked Questions

The Rise of Large Language Models

LLMs represent a major advancement in natural language processing, powering innovative applications. However, their size and computational intensity make deployment on resource-limited devices difficult. Quantization, a technique to reduce model complexity while preserving functionality, offers a promising solution to this problem. This paper provides a thorough examination of LLM quantization, covering its theoretical foundations, practical implementation, and real-world uses. We analyze different quantization methods, their impact on performance, and deployment challenges to provide a complete understanding.

LLM Quantization: An In-Depth Look

Understanding Quantization

Quantization maps continuous values to discrete representations, usually with lower bit-widths. In LLMs, this means reducing the precision of weights and activations from floating-point to lower-bit integer or fixed-point formats. This results in smaller models, reduced memory usage, and, on hardware with efficient low-precision arithmetic, faster inference.
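As a rough illustration of this mapping, the sketch below quantizes a short list of floats to 8-bit unsigned integers and maps them back. It assumes an asymmetric (affine) scheme with a single scale and zero point for the whole list; the function names and the sample weights are hypothetical and not taken from any particular library.

```python
def quantize(values, num_bits=8):
    """Map floats to integers in [0, 2**num_bits - 1] using one scale and zero point."""
    qmin, qmax = 0, 2 ** num_bits - 1
    lo, hi = min(values), max(values)
    # Step size between adjacent integer levels; fall back to 1.0 if all values are equal.
    scale = (hi - lo) / (qmax - qmin) or 1.0
    # Integer that represents the float value 0.0.
    zero_point = round(qmin - lo / scale)
    q = [min(qmax, max(qmin, round(v / scale) + zero_point)) for v in values]
    return q, scale, zero_point


def dequantize(q, scale, zero_point):
    """Recover approximate float values from the integer codes."""
    return [(x - zero_point) * scale for x in q]


weights = [-1.0, -0.5, 0.0, 0.25, 1.0]  # hypothetical example weights
q, scale, zero_point = quantize(weights)
restored = dequantize(q, scale, zero_point)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, restored, max_error)
```

Each value is stored in one byte instead of four (for 32-bit floats), and the reconstruction error per value stays within about one quantization step.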

Quantization Methods

- Post-training Quantization (PTQ): Quantizes an already-trained model without retraining. Common variants include:

  - Uniform quantization: Maps floating-point values to a fixed number of evenly spaced quantization levels. This is straightforward but can introduce errors, especially when the value distribution is skewed or contains outliers.

  - Dynamic quantization: Adapts quantization parameters during inference based on input statistics. This can improve accuracy but adds computational overhead.

  - Weight clustering: Groups weights into clusters and represents each cluster with a central value. This reduces the number of unique weights, saving memory and potentially reducing computation.

- Quantization-Aware Training (QAT): Integrates quantization into training so that the model learns to compensate for quantization error, which often preserves more accuracy than post-training methods. Techniques include simulated quantization, the straight-through estimator (STE), and differentiable quantization.
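To make the weight clustering idea concrete, here is a minimal sketch that uses one-dimensional k-means to group weights and replace each with its cluster centre. The choice of k-means, the evenly spaced initialisation, and the sample weights are assumptions made for illustration; practical implementations differ in how they pick and refine centroids.

```python
def cluster_weights(weights, num_clusters=4, iterations=20):
    """Simple 1-D k-means. Returns (centroids, assignments)."""
    lo, hi = min(weights), max(weights)
    # Initialise centroids evenly across the weight range (assumes num_clusters >= 2).
    centroids = [lo + (hi - lo) * i / (num_clusters - 1) for i in range(num_clusters)]
    assignments = [0] * len(weights)
    for _ in range(iterations):
        # Assignment step: each weight joins its nearest centroid.
        assignments = [
            min(range(num_clusters), key=lambda k: abs(w - centroids[k]))
            for w in weights
        ]
        # Update step: each centroid moves to the mean of its members.
        for k in range(num_clusters):
            members = [w for w, a in zip(weights, assignments) if a == k]
            if members:
                centroids[k] = sum(members) / len(members)
    return centroids, assignments


weights = [-0.91, -0.88, -0.12, -0.10, 0.09, 0.11, 0.87, 0.93]  # hypothetical
centroids, assignments = cluster_weights(weights)
clustered = [centroids[a] for a in assignments]
max_error = max(abs(w - c) for w, c in zip(weights, clustered))
print(centroids, assignments, max_error)
```

With four clusters, each weight can be stored as a 2-bit index into a small table of centroids rather than as a full floating-point number.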

Visualizations

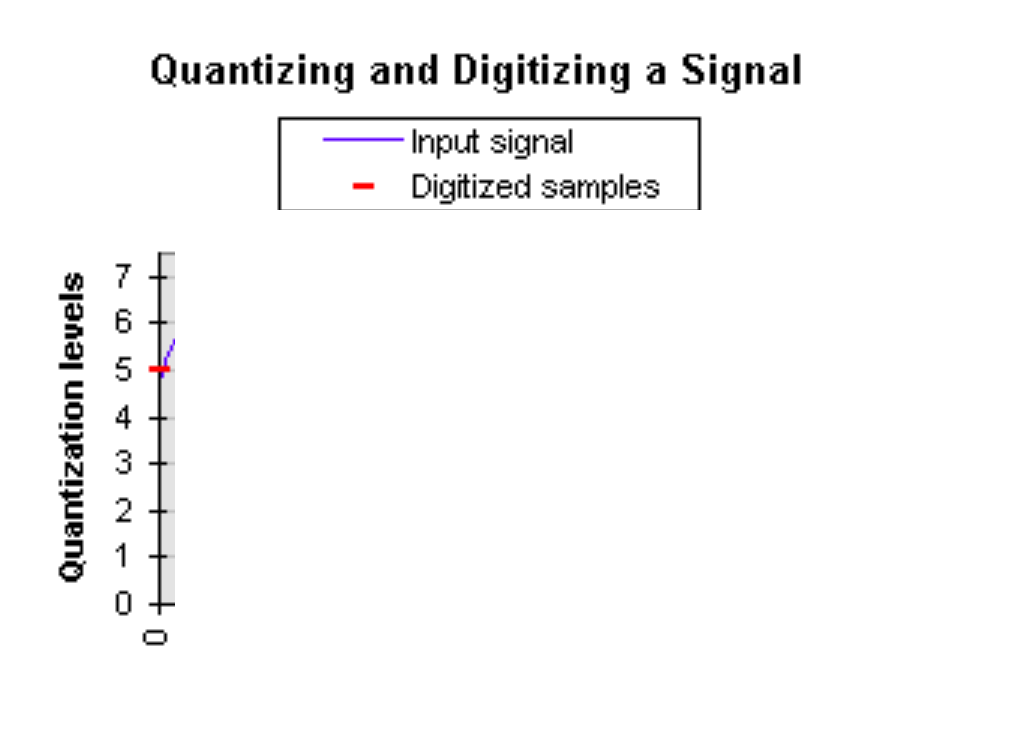

Uniform Quantization:

Illustrates mapping floating-point values to discrete levels.

Dynamic Quantization:

Shows how the quantization range adjusts based on input values.

Weight Clustering:

Depicts grouping weights into clusters represented by central values.

Quantization-Aware Training:

Illustrates the integration of quantization into the training process.
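The following toy sketch shows how simulated quantization and the straight-through estimator can fit together in a training loop. It is a hand-written, single-weight example under stated assumptions (a fixed step size of 0.25, a 4-bit signed range, and a made-up regression task), not the procedure of any specific framework. Because rounding has zero gradient almost everywhere, the backward pass treats it as the identity so the underlying full-precision weight can still be updated.

```python
def fake_quantize(w, scale=0.25, qmin=-8, qmax=7):
    """Simulated quantization: snap w to the nearest level but keep it as a float."""
    q = max(qmin, min(qmax, round(w / scale)))
    return q * scale


def ste_grad(w, upstream, scale=0.25, qmin=-8, qmax=7):
    """Straight-through estimator: pass the gradient through the rounding step
    unchanged inside the clipping range, and block it outside that range."""
    inside = qmin * scale <= w <= qmax * scale
    return upstream if inside else 0.0


# Hypothetical task: fit y = w * x, where the ideal weight is 1.0.
data = [(1.0, 1.0), (2.0, 2.0), (3.0, 3.0)]
w = 0.1    # latent full-precision weight updated by the optimiser
lr = 0.02  # learning rate

for _ in range(200):
    wq = fake_quantize(w)  # the forward pass uses the quantized weight
    grad_wq = 0.0
    for x, y in data:
        err = wq * x - y
        grad_wq += 2 * err * x / len(data)  # d(mean squared error)/d(wq)
    w -= lr * ste_grad(w, grad_wq)          # the backward pass uses the STE

final_wq = fake_quantize(w)
print(w, final_wq)
```

The latent weight keeps moving in small steps while the quantized weight jumps between levels, and training stops changing once the quantized weight fits the data.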

Quantization's Effect on Model Performance

Quantization usually costs some accuracy, although the loss can be small at moderate bit-widths. The extent of the loss depends on:

- Model Architecture: Deeper, wider models tend to be more robust to quantization.

- Dataset Size and Complexity: Models trained on larger, more complex datasets tend to lose less accuracy when quantized.

- Quantization Bitwidth: Lower bitwidths lead to greater performance drops.

- Quantization Method: The chosen method significantly impacts performance.
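The effect of bit-width can be illustrated numerically. The sketch below measures the mean squared error introduced by symmetric uniform quantization at 8, 4, and 2 bits. The synthetic values stand in for model weights and are purely hypothetical; real weight distributions and real accuracy metrics will behave differently in detail.

```python
import math


def quantization_mse(values, num_bits):
    """Mean squared error after symmetric uniform quantization to num_bits."""
    qmax = 2 ** (num_bits - 1) - 1               # e.g. 127 for 8 bits
    scale = max(abs(v) for v in values) / qmax   # one scale for all values
    restored = [
        max(-qmax, min(qmax, round(v / scale))) * scale
        for v in values
    ]
    return sum((a - b) ** 2 for a, b in zip(values, restored)) / len(values)


# Deterministic synthetic "weights" in [-0.5, 0.5] (an assumption for illustration).
values = [0.5 * math.sin(0.37 * i) for i in range(1000)]

errors = {bits: quantization_mse(values, bits) for bits in (8, 4, 2)}
for bits, mse in errors.items():
    print(f"{bits}-bit: MSE = {mse:.2e}")
```

Numerical error of this kind is only a proxy: how much it affects task accuracy depends on the model and the quantization method.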

Key Metrics

Performance is assessed using:

- Accuracy: Measures performance on a given task (e.g., classification accuracy, BLEU score).

- Model Size: Quantifies the reduction in model size.

- Inference Speed: Evaluates speed improvements from quantization.

- Energy Consumption: Measures the power efficiency of the quantized model.
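For the model size metric, a back-of-the-envelope calculation is often enough. The sketch below estimates weight storage at different bit-widths for a hypothetical 7-billion-parameter model. It counts weights only and ignores activations, caches, and the small overhead of storing scales and zero points.

```python
def model_size_gib(num_params, bits_per_weight):
    """Approximate weight storage in GiB (weights only)."""
    return num_params * bits_per_weight / 8 / 2 ** 30


num_params = 7_000_000_000  # hypothetical 7B-parameter model

sizes = {bits: model_size_gib(num_params, bits) for bits in (32, 16, 8, 4)}
for bits, size in sizes.items():
    print(f"{bits:>2}-bit: {size:6.2f} GiB ({32 // bits}x smaller than 32-bit)")
```

Halving the bit-width halves the weight storage, which is why 4-bit and 8-bit formats are attractive for memory-limited devices.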

Applications of Quantized LLMs

Quantized LLMs are transforming various applications:

- Edge Computing: Deploying LLMs on resource-constrained devices for real-time applications.

- Mobile Applications: Running language features on-device, improving the responsiveness and efficiency of mobile apps.

- Internet of Things (IoT): Enabling intelligent capabilities on IoT devices.

- Autonomous Systems: Reducing computational costs for real-time decision-making.

- Natural Language Understanding (NLU): Accelerating NLU tasks across various domains.


Challenges in LLM Quantization

Despite its potential, LLM quantization faces challenges:

- Efficiency-Accuracy Trade-off: Balancing reductions in model size and latency against the loss of accuracy.

- Hardware Acceleration: Developing specialized hardware that executes low-precision (quantized) operations efficiently.

- Task-Specific Quantization: Tailoring techniques for different tasks and domains.

Future Research:

- Developing novel quantization methods with minimal performance loss.

- Exploring hardware-software co-design for optimized quantization.

- Investigating the impact of quantization on different LLM architectures.

- Quantifying the environmental benefits of LLM quantization.

Conclusion

LLM quantization is crucial for deploying large language models on resource-limited platforms. By choosing a quantization method and evaluation metrics that match the requirements of the application, practitioners can use this technique to balance accuracy against efficiency. Continued research promises further advancements, unlocking new possibilities for AI applications.

Frequently Asked Questions

Q1. What is LLM Quantization? It reduces the precision of model weights and activations to lower-bit formats, resulting in smaller, faster, and more memory-efficient models.

Q2. What are the main quantization methods? Post-Training Quantization (uniform quantization, dynamic quantization, and weight clustering) and Quantization-Aware Training (QAT).

Q3. What challenges does LLM Quantization face? Balancing efficiency gains against accuracy loss, the need for specialized hardware, and the development of task-specific quantization techniques.

Q4. How does quantization affect model performance? It can reduce accuracy, but the impact varies depending on model architecture, dataset complexity, the bitwidth used, and the quantization method.

The above is the detailed content of A Comprehensive Guide on LLM Quantization and Use Cases. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). Heading Toward AGI And

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

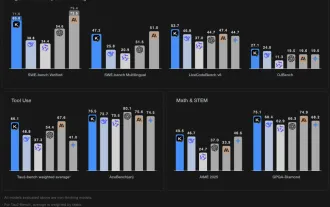

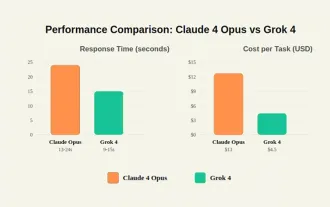

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

In-depth discussion on how artificial intelligence can help and harm all walks of life

Jul 04, 2025 am 11:11 AM

In-depth discussion on how artificial intelligence can help and harm all walks of life

Jul 04, 2025 am 11:11 AM

We will discuss: companies begin delegating job functions for AI, and how AI reshapes industries and jobs, and how businesses and workers work.

Premier League Makes An AI Play To Enhance The Fan Experience

Jul 03, 2025 am 11:16 AM

Premier League Makes An AI Play To Enhance The Fan Experience

Jul 03, 2025 am 11:16 AM

On July 1, England’s top football league revealed a five-year collaboration with a major tech company to create something far more advanced than simple highlight reels: a live AI-powered tool that delivers personalized updates and interactions for ev

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

10 Amazing Humanoid Robots Already Walking Among Us Today

Jul 16, 2025 am 11:12 AM

But we probably won’t have to wait even 10 years to see one. In fact, what could be considered the first wave of truly useful, human-like machines is already here. Recent years have seen a number of prototypes and production models stepping out of t

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Context Engineering is the 'New' Prompt Engineering

Jul 12, 2025 am 09:33 AM

Until the previous year, prompt engineering was regarded a crucial skill for interacting with large language models (LLMs). Recently, however, LLMs have significantly advanced in their reasoning and comprehension abilities. Naturally, our expectation

Chip Ganassi Racing Announces OpenAI As Mid-Ohio IndyCar Sponsor

Jul 03, 2025 am 11:17 AM

Chip Ganassi Racing Announces OpenAI As Mid-Ohio IndyCar Sponsor

Jul 03, 2025 am 11:17 AM

OpenAI, one of the world’s most prominent artificial intelligence organizations, will serve as the primary partner on the No. 10 Chip Ganassi Racing (CGR) Honda driven by three-time NTT IndyCar Series champion and 2025 Indianapolis 500 winner Alex Pa