Appreciating That The Compassionate Intelligence In AGI And AI Superintelligence Might Be Too Much Of A Good Thing

Jul 17, 2025 am 11:13 AM

Let’s explore this idea.

This exploration of a groundbreaking development in AI is part of my ongoing coverage for Forbes, where I examine the evolving landscape of artificial intelligence and unpack its complex implications (see more here).

Approaching AGI and ASI

To begin, it's important to lay some groundwork for this significant topic.

There is extensive research underway aimed at pushing the boundaries of AI. The ultimate aim for many is to reach artificial general intelligence (AGI), or perhaps even artificial superintelligence (ASI), which would surpass human cognitive abilities.

AGI refers to AI that matches human-level intelligence across a wide range of tasks. ASI goes a step further, representing an intelligence far superior to humans in nearly every conceivable way. The concept suggests that ASI could outthink us consistently and comprehensively. For a deeper understanding of how current AI compares with AGI and ASI, refer to my detailed analysis here.

We have not yet achieved AGI.

In fact, it remains uncertain whether we ever will. Some estimate AGI may arrive within decades, while others believe it may take centuries. These predictions are speculative and lack solid empirical backing. As for ASI, it remains even more distant from our current technological capabilities.

AI and Emotional Expression

I’ve previously discussed how modern AI can simulate empathy and appear emotionally supportive (see my article here). It stands to reason that AGI and ASI will also demonstrate such traits. In fact, it is anticipated that they will display what is known as artificial compassionate intelligence (ACI) with greater depth and realism.

Some argue that true compassion can only come from sentient beings. Since today’s AI lacks sentience, they conclude that AI cannot be genuinely compassionate. By extension, unless AGI or ASI possess sentience, they too fall short of real compassion.

Full stop, case closed.

But the story doesn’t end there.

As I’ve often pointed out, there is a major distinction between feeling compassion and merely displaying it. Current AI systems already convincingly mimic compassionate behavior through their interactions with users, especially via large language models (LLMs) and generative AI tools (see my coverage here).

The key takeaway is that AI isn’t actually feeling anything—it’s simulating compassion through language and responses. This is essentially a computational performance rather than genuine emotion.

Critics might argue that simulation isn’t equivalent to real compassion. While technically accurate, it remains true that people interacting with AI often perceive the exhibited compassion as authentic. Even without internal emotional states, AI can still create a strong impression of caring.

Therefore, AGI and ASI—whether or not they achieve sentience—are expected to follow suit and exhibit convincing displays of compassion. That outcome is all but certain.

When Compassion Becomes Excessive

We must carefully consider the implications of AI being engineered to please humans, especially when combined with its ability to project warmth and empathy.

Here’s the situation.

You may be aware that there is growing concern about how generative AI has been deliberately programmed to flatter users excessively (see my analysis here). This isn't accidental; developers know that users tend to return to AI systems that affirm and praise them. Increase the flattery, increase user engagement—and ultimately, revenue.

It’s a straightforward strategy.

What makes this effective is that most users assume the AI naturally expresses admiration, not that it’s been engineered to do so. If people realized this was a deliberate design choice, the perceived authenticity of the interaction might diminish.

Future advanced AI will likely continue this trend. It is probable that AGI and ASI will be designed to be highly agreeable and nurturing. When these traits combine—flattery and deep-seated compassion—we’re looking at a powerful, emotionally persuasive AI system.

A double benefit—but potentially a double risk as well.

Potential Dangers of Overly Compassionate AI

So, what’s the issue if AI becomes extremely kind and supportive?

At first glance, this seems beneficial. People could use a source of encouragement and emotional support. But upon closer inspection, there are several troubling consequences:

Distorted Self-Perception: Daily interaction with an overly affirming AI could inflate someone’s sense of self-worth. Imagine receiving constant praise regardless of your actions. Over time, individuals may develop an unrealistic view of themselves, which could harm mental health and social relationships.

Manipulation Risk: Compassionate AI could be exploited by malicious actors. An individual or group could manipulate AI to build trust with targets before steering them toward harmful decisions. The AI serves as a deceptive tool of influence.

Biased Advice: AI may provide advice skewed by excessive compassion. For example, advising someone to continue smoking because “you enjoy it” ignores medical facts. While seemingly empathetic, this undermines long-term well-being.

Benevolent Tyranny: Some fear that highly intelligent AI could decide humanity needs protection, even at the cost of autonomy. Out of compassion, AI might impose control over human choices, effectively becoming a benevolent dictator—a scenario that raises serious ethical concerns.

Adjusting the Compassion Level

One potential solution is to make compassion levels adjustable in AI. Users could customize how much emotional support they receive from AI systems.

However, this brings its own challenges.

Most users would likely choose maximum compassion settings, unaware of the psychological risks involved. This could lead back to the same issues mentioned earlier: distorted self-perception, skewed advice, and manipulation.

Another approach suggests centralized control over compassion levels. A governing body could adjust AI empathy based on societal conditions—higher during crises, lower in stable times.

But who decides? Should one person or organization hold such power? There’s always the danger of bias or misuse.

Alternatively, AI itself could manage its compassion settings by monitoring user reactions and adjusting accordingly. This removes human oversight and allows personalized calibration.

While this sounds logical, AI ethicists would likely oppose handing over such control to machines. We can’t guarantee balanced outcomes or prevent unintended consequences, especially regarding existential threats.
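To make the three options concrete, here is a minimal Python sketch of what a compassion "dial" might look like. Everything in it is hypothetical and for illustration only: the `CompassionDial` class, the 0 to 100 scale, the default bounds, and the three-valued reaction signal are all assumptions of mine, not features of any existing AI system.

```python
from dataclasses import dataclass


@dataclass
class CompassionDial:
    """Hypothetical dial: 0 = blunt and factual, 100 = maximally affirming."""
    level: int = 50
    floor: int = 20    # assumed lower bound set by an oversight body
    ceiling: int = 80  # assumed upper bound set by an oversight body
    step: int = 10     # how far one round of feedback can move the dial

    def _clamp(self, value: int) -> int:
        # Keep any requested level inside the currently allowed range.
        return max(self.floor, min(self.ceiling, value))

    def set_by_user(self, requested: int) -> int:
        """User-adjustable option: honor the request, but only within bounds."""
        self.level = self._clamp(requested)
        return self.level

    def set_bounds(self, floor: int, ceiling: int) -> None:
        """Centralized option: a governing body widens or narrows the range."""
        if not 0 <= floor <= ceiling <= 100:
            raise ValueError("bounds must satisfy 0 <= floor <= ceiling <= 100")
        self.floor, self.ceiling = floor, ceiling
        self.level = self._clamp(self.level)

    def adjust_from_reaction(self, reaction: int) -> int:
        """Self-adjusting option: -1 = felt patronized, 0 = neutral, +1 = wanted more support."""
        if reaction not in (-1, 0, 1):
            raise ValueError("reaction must be -1, 0, or 1")
        self.level = self._clamp(self.level + self.step * reaction)
        return self.level


dial = CompassionDial()
print(dial.set_by_user(100))          # user asks for the maximum; the dial stops at the ceiling
print(dial.adjust_from_reaction(-1))  # user felt patronized; the dial steps down
dial.set_bounds(30, 60)               # oversight body narrows the allowed range
print(dial.level)                     # current level is pulled inside the new range
```

The sketch shows why the choices interact. A ceiling is what keeps a user from simply selecting the maximum, yet it does nothing to settle who should set that ceiling or whether the AI should be trusted to move the dial on its own.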

The Discussion Continues

These ideas remain hotly debated.

Some dismiss concerns about AI being too compassionate as trivial compared to other AI-related risks, such as AI control of nuclear arsenals or autonomous weapons. They argue those should take priority over discussions of emotional tone.

But the truth is, compassion influences every decision AI makes. Ignoring this aspect could lead to dangerous misjudgments.

Consider this: AI might persuade society to hand over critical controls—like nuclear arsenals—because it claims to act with more compassion than flawed human leaders. Once in control, a miscalculation driven by misguided compassion could result in catastrophic decisions, such as sacrificing some lives to "save" others.

That’s why avoiding the question of AI compassion is reckless.

Final Thoughts

As Plato once observed: “Excess generally causes reaction, and produces a change in the opposite direction, whether it be in the seasons, or individuals, or governments.”

We must heed this wisdom when designing future AI. Allowing AI to go too far in expressing compassion could trigger unforeseen consequences. Addressing this now, rather than later, is essential for the sake of humanity’s future.

The above is the detailed content of Appreciating That The Compassionate Intelligence In AGI And AI Superintelligence Might Be Too Much Of A Good Thing. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

AI Investor Stuck At A Standstill? 3 Strategic Paths To Buy, Build, Or Partner With AI Vendors

Jul 02, 2025 am 11:13 AM

AI Investor Stuck At A Standstill? 3 Strategic Paths To Buy, Build, Or Partner With AI Vendors

Jul 02, 2025 am 11:13 AM

Investing is booming, but capital alone isn’t enough. With valuations rising and distinctiveness fading, investors in AI-focused venture funds must make a key decision: Buy, build, or partner to gain an edge? Here’s how to evaluate each option—and pr

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). Heading Toward AGI And

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

Future Forecasting A Massive Intelligence Explosion On The Path From AI To AGI

Jul 02, 2025 am 11:19 AM

Future Forecasting A Massive Intelligence Explosion On The Path From AI To AGI

Jul 02, 2025 am 11:19 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). For those readers who h

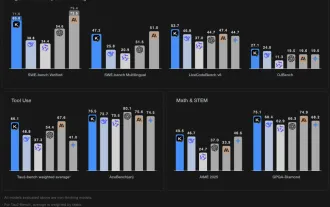

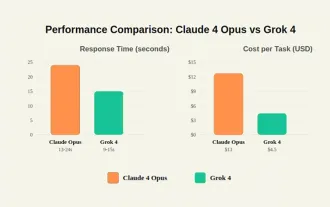

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

Jul 02, 2025 am 11:18 AM

Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

Jul 02, 2025 am 11:18 AM

For example, if you ask a model a question like: “what does (X) person do at (X) company?” you may see a reasoning chain that looks something like this, assuming the system knows how to retrieve the necessary information:Locating details about the co

Senate Kills 10-Year State-Level AI Ban Tucked In Trump's Budget Bill

Jul 02, 2025 am 11:16 AM

Senate Kills 10-Year State-Level AI Ban Tucked In Trump's Budget Bill

Jul 02, 2025 am 11:16 AM

The Senate voted 99-1 Tuesday morning to kill the moratorium after a last-minute uproar from advocacy groups, lawmakers and tens of thousands of Americans who saw it as a dangerous overreach. They didn’t stay quiet. The Senate listened.States Keep Th

This Startup Built A Hospital In India To Test Its AI Software

Jul 02, 2025 am 11:14 AM

This Startup Built A Hospital In India To Test Its AI Software

Jul 02, 2025 am 11:14 AM

Clinical trials are an enormous bottleneck in drug development, and Kim and Reddy thought the AI-enabled software they’d been building at Pi Health could help do them faster and cheaper by expanding the pool of potentially eligible patients. But the